Russian and Chinese interference networks are ‘building audiences’ ahead of 2024, warns Meta

Foreign interference groups are attempting to build and reach online audiences ahead of a number of significant elections next year, “and we need to remain alert,” Meta warned on Thursday.

National elections are set to be held in the United States, United Kingdom and India — three of the world’s largest economies — as well as in a number of countries that have previously been targeted by foreign interference, including Taiwan and Moldova.

In the company’s latest adversarial threat report, Meta released findings on three separate influence operations that it had recently disrupted, two from China and one from Russia, and set out the challenges posed by generative artificial intelligence.

The Chinese campaigns primarily targeted India and the Tibet region, as well as the United States. The Russian campaign — linked to employees of state-controlled media entity RT — focused on criticizing U.S. President Joe Biden for his support of Ukraine, and French President Emmanuel Macron for France’s activity in West Africa.

The social media giant first began to publish reports about what it calls coordinated inauthentic behavior (CIB) following the Russian interference in the U.S. presidential election of 2016, something for which social media companies were called to account by multiple congressional committees.

But attempts to respond to this interference have been controversial. Thomas Rid — a professor of Strategic Studies at Johns Hopkins — has argued that the decision by Democrats to release divisive material spread online during the 2016 election did more damage to public trust than the original posts themselves.

“During previous US election cycles, we identified Russian and Iranian operations that claimed they were running campaigns that were big enough to sway election results, when the evidence showed that they were small and ineffective,” stated Meta’s new report.

So-called “perception hacking” has become a key component of interference campaigns, attempting “to sow doubt in democratic processes or in the very concept of ‘facts’ without the threat actors having to impact the process itself.”

A defense against this is “fact-based, routine and predictable threat reporting,” the company said, stressing that its work since 2017 “has shown that the existence of CIB campaigns does not automatically mean they are successful.”

Allegations of political censorship have also prompted controversy. Back in July, a district judge in Louisiana issued an injunction preventing U.S. federal agencies from sharing intelligence with social media companies, following a lawsuit by two Republican attorneys general alleging conservative views were being censored online.

Meta said such sharing has “proven critical to identifying and disrupting foreign interference early, ahead of elections.”

Speaking to journalists on Wednesday, the company’s global threat intelligence lead, Ben Nimmo, confirmed: “While information exchange continues with experts across our industry and civil society, threat sharing by the federal government in the U.S. related to foreign election interference has been paused since July.”

Image: Meta

Chinese interference

In its threat report, Meta announced it had removed thousands of Facebook accounts that, despite being operated from China, posed as Americans to post about U.S. politics and relations between Washington and Beijing.

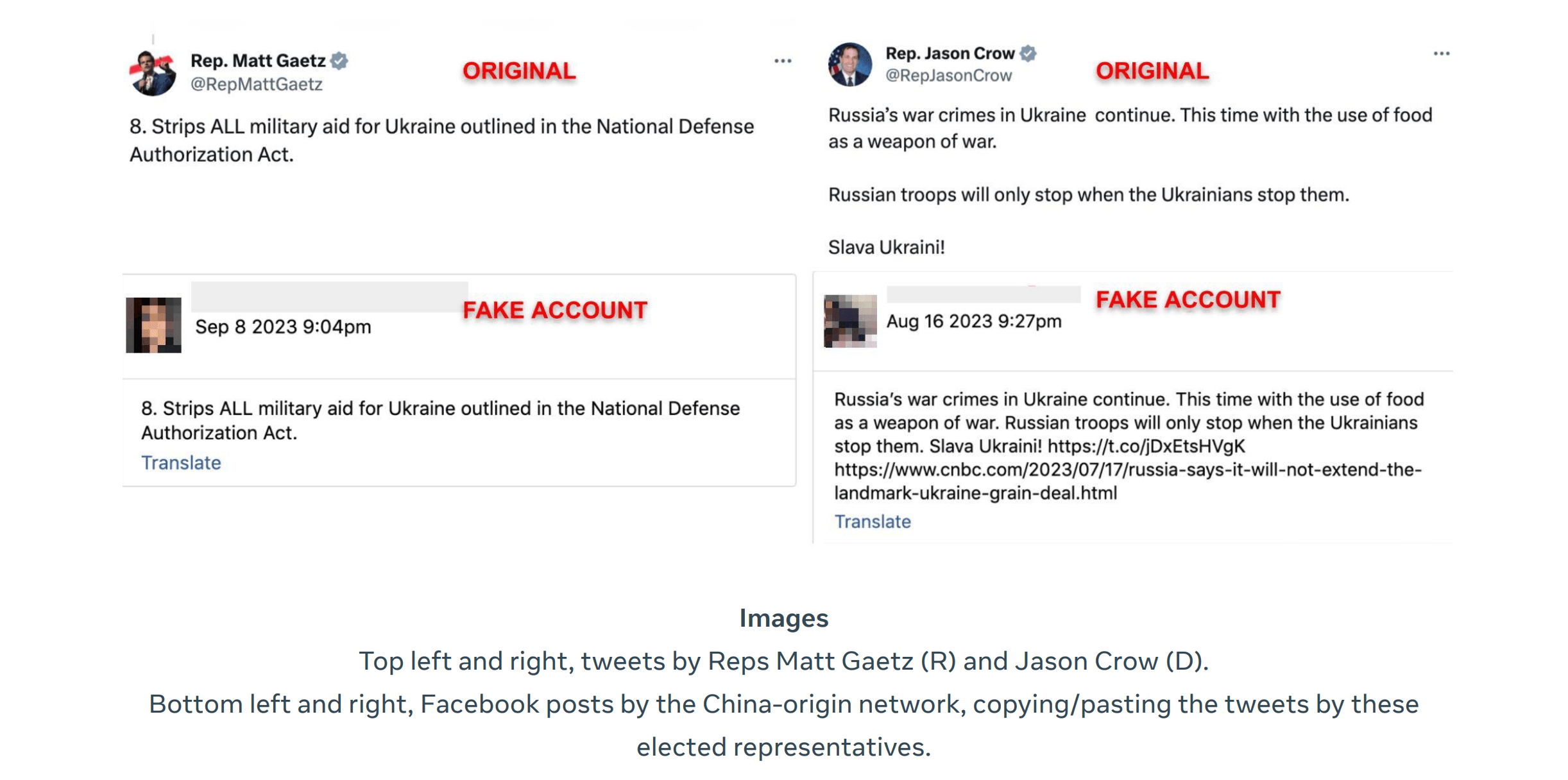

“The same accounts would criticize both sides of the US political spectrum by using what appears to be copy-pasted partisan content from people on X [formerly Twitter],” stated the company’s report.

Samples of messages shared by Meta included comments by House of Representatives members Matt Gaetz and Andy Biggs, both Republicans, alongside Josh Gottheimer and Jason Crow, both Democrats.

“It’s unclear whether this approach was designed to amplify partisan tensions, build audiences among these politicians’ supporters, or to make fake accounts sharing authentic content appear more genuine,” wrote Meta.

Nimmo told journalists: “As election campaigns ramp up, we should expect foreign influence operations to try and leverage authentic parties and debates rather than creating original content themselves.”

In the other Chinese campaign primarily targeting India and the Tibet region, Meta removed 13 accounts and seven groups operating fictitious personas on both Facebook and X “posing as journalists, lawyers and human-rights activists” to defend China’s human rights record and to spread allegations of corruption about Tibetan and Indian leaders.

“China is now the third most common geographical source of foreign CIB that we’ve disrupted after Russia and Iran,” said Nimmo, noting that “overall these networks are still struggling to build audiences, but they’re a warning.”

Russian interference

Russia is set to host its own presidential election in 2024, although few international observers believe it will be truly contested. Elections among its adversaries — particularly those who are vocally supporting Ukraine — are likely to be targeted by the country’s apparatus for interference.

“Russia remains the world’s most prolific geographic source of CIB,” said Nimmo, adding that “for nearly two years since its full-scale invasion of Ukraine, Russia-based covert influence operations have focused primarily on undermining international support for Ukraine.”

Meta removed six Facebook accounts and three Instagram accounts that targeted English-speaking audiences. The company said the accounts originated in Russia and were linked to employees of RT, the state-controlled media business.

The campaign attempted to create what it presented as independent, grassroots news entities that had presences on multiple platforms including Telegram, TikTok and YouTube, communicating in English primarily about Russia’s invasion of Ukraine, “accusing Ukraine of war crimes and Western countries of ‘russophobia’,” according to Meta.

The company, which had previously identified a Russian disinformation campaign that created fake articles masquerading as legitimate stories from The Washington Post and Fox News, said it had found a new cluster of websites connected to the same campaign.

“These sites focus directly on US and European politics,” said Meta, citing names such as “Lies of Wall Street”, “Spicy Conspiracy”, “Le Belligerent” in France and “Der Leistern” in Germany. The websites haven’t received “much amplification by authentic audiences,” the company added.
